Detectron2 로 Mask R-CNN 학습하기

1. 아나콘다 가상환경 세팅

$ conda create -n detectron2 python==3.8 -y

$ conda activate detectron2

2. PyTorch 설치

https://pytorch.org/get-started/previous-versions/ 에서 CUDA 버전에 맞는 PyTorch 설치

#(CUDA 11.0 기준 Torch v1.7.0)

$ conda install pytorch==1.7.0 torchvision==0.8.0 torchaudio==0.7.0 cudatoolkit=11.0 -c pytorch -y

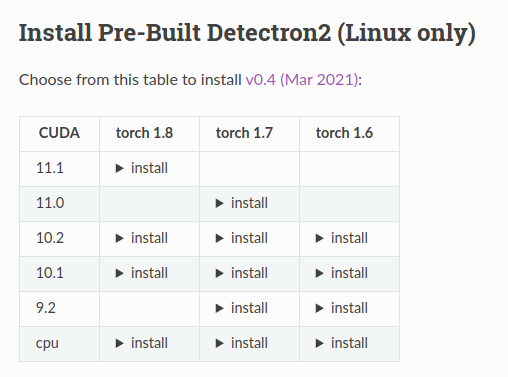

3. Detectron2 설치

기본 조건:

- Linux or macOS with Python ≥ 3.6

- PyTorch ≥ 1.7 and torchvision that matches the PyTorch installation

- OpenCV (optional)

1) Linux

https://detectron2.readthedocs.io/en/latest/tutorials/install.html 에서 CUDA와 Torch 버전에 맞게 Detectron2 설치

# (CUDA 11.0 기준 Torch v1.7.0)

$ python -m pip install detectron2 -f \ https://dl.fbaipublicfiles.com/detectron2/wheels/cu110/torch1.7/index.html

2) macOS

python -m pip install 'git+https://github.com/facebookresearch/detectron2.git'

또는

git clone https://github.com/facebookresearch/detectron2.git

python -m pip install -e detectron2

# On macOS, you may need to prepend the above commands with a few environment variables:

CC=clang CXX=clang++ ARCHFLAGS="-arch x86_64" python -m pip install ...

3) Windows

공식적으로는 support 안함

아래 깃허브에서 Detectron2 Windows Build 가능

https://github.com/conansherry/detectron2

4) 공식적으로 제공하는 Colab Tutorial 를 복사해서 Colab 에서 학습가능

https://colab.research.google.com/drive/16jcaJoc6bCFAQ96jDe2HwtXj7BMD_-m5

4. 나머지 필요한 모듈 설치 (opencv, fvcore)

$ pip install opencv-python

$ pip install -U git+https://github.com/facebookresearch/fvcore.git

5. Dataset 준비하기

1) train-test split

data 폴더 안에 train / val / test 폴더를 만든 후에 train-test split 하고, 이미지 파일(.jpg) 와 라벨 파일 (.json)을 같이 넣어준다.

$ pip install scikit-learnfrom sklearn.model_selection import train_test_split

from glob import glob

import shutil

import os

# 1. 이미지 파일 경로 (뒤에 확장자 포함)

image_files = glob("./data/files/*.JPG")

# 2. 이미지 파일명 가져오기

images = [name.replace(".jpg","") for name in image_files]

#splitting the dataset

#train:val:test = 7:2:1

train_names, test_names = train_test_split(images, test_size=0.3, random_state=777, shuffle=True)

val_names, test_names = train_test_split(test_names, test_size=0.3, random_state=777, shuffle=True)

def batch_move_files(file_list, source_path, destination_path):

for file in file_list:

image = file.split('/')[-1] + '.JPG' # .jpg or jpeg

txt = file.split('/')[-1] + '.json' # .txt or .json

shutil.copy(os.path.join(source_path, image), destination_path)

shutil.copy(os.path.join(source_path, txt), destination_path)

return

# 3. 이미지 파일 경로

source_dir = "./data/files/"

# 4. 분리된 데이터 셋들을 저장할 새로운 경로

test_dir = "./data/test/"

train_dir = "./data/train/"

val_dir = "./data/val/"

os.makedirs(test_dir, exist_ok=True)

os.makedirs(train_dir, exist_ok=True)

os.makedirs(val_dir, exist_ok=True)

batch_move_files(train_names, source_dir, train_dir)

batch_move_files(test_names, source_dir, test_dir)

batch_move_files(val_names, source_dir, val_dir)

2) labelme로 라벨링한 annotation을 coco annotation으로 변경

train.json, val.json, test.json 파일 생성

$ pip install labelmeimport os

import argparse

import json

from labelme import utils

import numpy as np

import glob

import PIL.Image

class labelme2coco(object):

def __init__(self, labelme_json=[], save_json_path="./coco.json"):

"""

:param labelme_json: the list of all labelme json file paths

:param save_json_path: the path to save new json

"""

self.labelme_json = labelme_json

self.save_json_path = save_json_path

self.images = []

self.categories = []

self.annotations = []

self.label = []

self.annID = 1

self.height = 0

self.width = 0

self.save_json()

def data_transfer(self):

for num, json_file in enumerate(self.labelme_json):

with open(json_file, "r") as fp:

data = json.load(fp)

self.images.append(self.image(data, num))

for shapes in data["shapes"]:

label = shapes["label"].split("_")

if label not in self.label:

self.label.append(label)

points = shapes["points"]

self.annotations.append(self.annotation(points, label, num))

self.annID += 1

# Sort all text labels so they are in the same order across data splits.

self.label.sort()

for label in self.label:

self.categories.append(self.category(label))

for annotation in self.annotations:

annotation["category_id"] = self.getcatid(annotation["category_id"])

def image(self, data, num):

image = {}

img = utils.img_b64_to_arr(data["imageData"])

height, width = img.shape[:2]

img = None

image["height"] = height

image["width"] = width

image["id"] = num

image["file_name"] = data["imagePath"].split("/")[-1]

self.height = height

self.width = width

return image

def category(self, label):

category = {}

category["supercategory"] = label[0]

category["id"] = len(self.categories)

category["name"] = label[0]

return category

def annotation(self, points, label, num):

annotation = {}

contour = np.array(points)

x = contour[:, 0]

y = contour[:, 1]

area = 0.5 * np.abs(np.dot(x, np.roll(y, 1)) - np.dot(y, np.roll(x, 1)))

annotation["segmentation"] = [list(np.asarray(points).flatten())]

annotation["iscrowd"] = 0

annotation["area"] = area

annotation["image_id"] = num

annotation["bbox"] = list(map(float, self.getbbox(points)))

annotation["category_id"] = label[0] # self.getcatid(label)

annotation["id"] = self.annID

return annotation

def getcatid(self, label):

for category in self.categories:

if label == category["name"]:

return category["id"]

print("label: {} not in categories: {}.".format(label, self.categories))

exit()

return -1

def getbbox(self, points):

polygons = points

mask = self.polygons_to_mask([self.height, self.width], polygons)

return self.mask2box(mask)

def mask2box(self, mask):

index = np.argwhere(mask == 1)

rows = index[:, 0]

clos = index[:, 1]

left_top_r = np.min(rows) # y

left_top_c = np.min(clos) # x

right_bottom_r = np.max(rows)

right_bottom_c = np.max(clos)

return [

left_top_c,

left_top_r,

right_bottom_c - left_top_c,

right_bottom_r - left_top_r,

]

def polygons_to_mask(self, img_shape, polygons):

mask = np.zeros(img_shape, dtype=np.uint8)

mask = PIL.Image.fromarray(mask)

xy = list(map(tuple, polygons))

PIL.ImageDraw.Draw(mask).polygon(xy=xy, outline=1, fill=1)

mask = np.array(mask, dtype=bool)

return mask

def data2coco(self):

data_coco = {}

data_coco["images"] = self.images

data_coco["categories"] = self.categories

data_coco["annotations"] = self.annotations

return data_coco

def save_json(self):

print("save coco json")

self.data_transfer()

self.data_coco = self.data2coco()

print(self.save_json_path)

os.makedirs(

os.path.dirname(os.path.abspath(self.save_json_path)), exist_ok=True

)

json.dump(self.data_coco, open(self.save_json_path, "w"), indent=4)

if __name__ == "__main__":

import argparse

parser = argparse.ArgumentParser(

description="labelme annotation to coco data json file."

)

parser.add_argument(

"labelme_images",

help="Directory to labelme images and annotation json files.",

type=str,

)

parser.add_argument(

"--output", help="Output json file path.", default="trainval.json"

)

args = parser.parse_args()

labelme_json = glob.glob(os.path.join(args.labelme_images, "*.json"))

labelme2coco(labelme_json, args.output)

6. 학습 코드 작성

Detectron2 에서 제공하는 Colab Beginner Tutorial 베이스로 작성

1) model zoo 에서 사용할 모델 설정 (config file & pretrained weight)

- https://github.com/facebookresearch/detectron2/blob/master/MODEL_ZOO.md 참고

- cfg.merge_from_file(model_zoo.get_config_file("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"))

- cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml")

2) Num Workers, Batch Size, Learning Rate, Max Iteration, Num Classes, Test Eval Period (Validation 몇 번에 한번 돌리나) 변경

from detectron2.utils.logger import setup_logger

setup_logger()

import numpy as np

import os

from detectron2 import model_zoo

from detectron2.data.datasets import register_coco_instances

from detectron2.evaluation import COCOEvaluator

from detectron2.engine import DefaultTrainer

from detectron2.config import get_cfg

from detectron2.engine.hooks import HookBase

from detectron2.utils.logger import log_every_n_seconds

from detectron2.data import DatasetMapper, build_detection_test_loader

import detectron2.utils.comm as comm

import torch

import time

import datetime

import logging

register_coco_instances("train", {}, "/data/train.json", "/data/train")

register_coco_instances("val", {}, "/data/val.json", "/data/val")

register_coco_instances("test", {}, "/data/test.json", "/data/test")

cfg = get_cfg()

cfg.merge_from_file(model_zoo.get_config_file("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml")) #mask_rcnn_R_50_FPN_3x.yaml

cfg.DATASETS.TRAIN = ("train",)

cfg.DATASETS.TEST = ("val",)

cfg.DATALOADER.NUM_WORKERS = 2

cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml")

cfg.SOLVER.IMS_PER_BATCH = 16

cfg.SOLVER.BASE_LR = 0.00025

cfg.SOLVER.MAX_ITER = 6000

cfg.MODEL.ROI_HEADS.NUM_CLASSES = 2

cfg.TEST.EVAL_PERIOD = 500

os.makedirs(cfg.OUTPUT_DIR, exist_ok=True)

trainer = MyTrainer(cfg) #DefaultTrainer(cfg)

trainer.resume_or_load(resume=False)

trainer.train()

3) Default Trainer 를 사용해서 train 가능하지만, Validation process 를 더해주기 위해서 MyTrainer 를 선언해주고 Default Trainer 대신 MyTrainer 사용

- Test Eval Period (Validation 몇 번에 한번 돌리나) 사용

- cfg.TEST.EVAL_PERIOD = 500 이면 6000번의 iteration를 돌때 500번에 한번씩 Validation 진행

class LossEvalHook(HookBase):

def __init__(self, eval_period, model, data_loader):

self._model = model

self._period = eval_period

self._data_loader = data_loader

def _do_loss_eval(self):

# Copying inference_on_dataset from evaluator.py

total = len(self._data_loader)

num_warmup = min(5, total - 1)

start_time = time.perf_counter()

total_compute_time = 0

losses = []

for idx, inputs in enumerate(self._data_loader):

if idx == num_warmup:

start_time = time.perf_counter()

total_compute_time = 0

start_compute_time = time.perf_counter()

if torch.cuda.is_available():

torch.cuda.synchronize()

total_compute_time += time.perf_counter() - start_compute_time

iters_after_start = idx + 1 - num_warmup * int(idx >= num_warmup)

seconds_per_img = total_compute_time / iters_after_start

if idx >= num_warmup * 2 or seconds_per_img > 5:

total_seconds_per_img = (time.perf_counter() - start_time) / iters_after_start

eta = datetime.timedelta(seconds=int(total_seconds_per_img * (total - idx - 1)))

log_every_n_seconds(

logging.INFO,

"Loss on Validation done {}/{}. {:.4f} s / img. ETA={}".format(

idx + 1, total, seconds_per_img, str(eta)

),

n=5,

)

loss_batch = self._get_loss(inputs)

losses.append(loss_batch)

mean_loss = np.mean(losses)

self.trainer.storage.put_scalar('validation_loss', mean_loss)

comm.synchronize()

return losses

def _get_loss(self, data):

# How loss is calculated on train_loop

metrics_dict = self._model(data)

metrics_dict = {

k: v.detach().cpu().item() if isinstance(v, torch.Tensor) else float(v)

for k, v in metrics_dict.items()

}

total_losses_reduced = sum(loss for loss in metrics_dict.values())

return total_losses_reduced

def after_step(self):

next_iter = self.trainer.iter + 1

is_final = next_iter == self.trainer.max_iter

if is_final or (self._period > 0 and next_iter % self._period == 0):

self._do_loss_eval()

self.trainer.storage.put_scalars(timetest=12)

class MyTrainer(DefaultTrainer):

@classmethod

def build_evaluator(cls, cfg, dataset_name, output_folder=None):

if output_folder is None:

output_folder = os.path.join(cfg.OUTPUT_DIR,"inference")

return COCOEvaluator(dataset_name, cfg, True, output_folder)

def build_hooks(self):

hooks = super().build_hooks()

hooks.insert(-1, LossEvalHook(

cfg.TEST.EVAL_PERIOD,

self.model,

build_detection_test_loader(

self.cfg,

self.cfg.DATASETS.TEST[0],

DatasetMapper(self.cfg, True)

)

))

return hooks

4) train.py 실행

- output 폴더가 생성되고 weight가 거기에 자동으로 저장됨

'AI > Others' 카테고리의 다른 글

| [OpenCV] 2개의 이미지를 하나의 윈도우로 보여주는 방법 (0) | 2021.07.02 |

|---|---|

| 'Failed to import pydot. You must `pip install pydot` and install graphviz (0) | 2021.04.26 |

| AttributeError: 'tqdm_notebook' object has no attribute 'disp' (0) | 2021.04.26 |

| Multi GPU로 학습하기 - 리눅스 / Pytorch (0) | 2021.04.12 |

| RTX 3090 Ubuntu 18.04 CUDA, cuDNN (딥러닝 환경 구축) (0) | 2021.03.22 |

댓글

이 글 공유하기

다른 글

-

[OpenCV] 2개의 이미지를 하나의 윈도우로 보여주는 방법

[OpenCV] 2개의 이미지를 하나의 윈도우로 보여주는 방법

2021.07.02 -

'Failed to import pydot. You must `pip install pydot` and install graphviz

'Failed to import pydot. You must `pip install pydot` and install graphviz

2021.04.26 -

AttributeError: 'tqdm_notebook' object has no attribute 'disp'

AttributeError: 'tqdm_notebook' object has no attribute 'disp'

2021.04.26 -

Multi GPU로 학습하기 - 리눅스 / Pytorch

Multi GPU로 학습하기 - 리눅스 / Pytorch

2021.04.12